As a marketer who's passionate about optimization and testing, I always look forward to WhichTestWon's Annual Testing Awards.

It's a great opportunity for me to geek out on before-and-after A/B tests and see how other marketers are achieving big wins in digital. In advance of this Friday's WhichTestWon Awards ceremony, I interviewed one of the judges, Justin Rondeau (follow him on Twitter), to get a sneak peek of what we can expect this year. Justin is Chief Evangelist & Editor of WhichTestWon, and he's on a mission to inspire and educate more marketers to optimize their email campaigns and websites via testing.

That's a great mission, and I'm thrilled to introduce him to our OMI readers.

1. As you're judging this year's WhichTestWon Awards, are you noticing any trends in A/B testing?

A/B and multivariate testing for conversion optimization is exploding – both in the US and globally. We received hundreds of entries for WhichTestWon’s 4th annual Testing Awards from an incredible variety of companies big and small. Ecommerce marketers are heavily testing now, but we also saw a big uptick in entries from lead generation marketers, financial institutions, SaaS companies, automotive firms, and even online newspapers. So testing has definitely gone mainstream at last.

Big Giant Photographs

Photos on landing pages, homepages, ecommerce pages, and all sorts of other pages have gotten bigger and bigger, so in many cases they now dominate the page. Often there’s no more “white space”: a single huge photo is itself the page background, with forms or navigation laid on top of it.

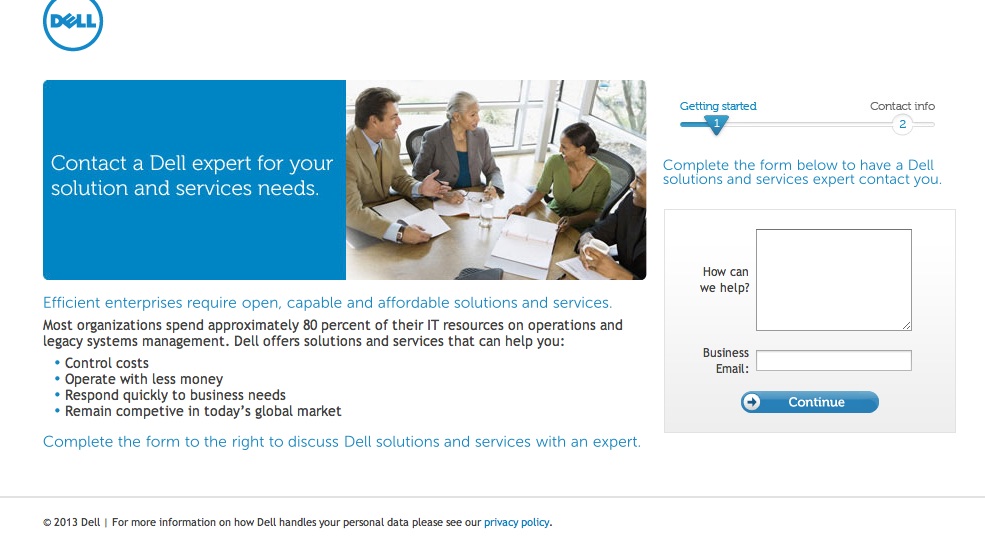

We can’t reveal winning tests until our free, live Webcast Awards ceremony on Friday Feb 15th, but to get you excited, here’s an A/B test example conducted for Dell by ion interactive where you can see this in action. The new version of this ‘contact us’ page for business visitors increased leads generated by 36% and lowered the page bounce rate by 27%.

The Control

The Winner!

The champion! This version of the ‘contact us’ page for business visitors increased leads generated by 36% and lowered the page bounce rate by 27%.

Slashing Form Fields

Lots of lead generation marketers are testing cuts to the number of form fields (i.e. questions) on registration, opt-in, and sign-in pages. Often this requires a lot of behind-the-scenes maneuvering in office politics, because form fields may be dictated by your sales department, which only wants “qualified” leads, or by your database or CRM set-up, which has predetermined fields that “must” be filled out. Test results are showing it may be worth the extra work to get other departments to agree to fewer form fields. In general, you’ll get better conversions if you cut fields… but that’s not always the case. We’re going to be revealing one Award winner who added a big fat field without hurting lead generation at all!

Less Sales Funnel Measurement

We’re sad about this trend, but expect it to reverse over time. Marketers conducting tests are less likely than they were in years past (in the case of lead generation marketers FAR less likely) to measure test results beyond an initial click or form fill. This can really hurt your company because sometimes test results can reverse when you track leads further down the sales funnel or get more stats on the size of an average ecommerce sale – so the version you thought was the winner is actually the loser.

We think this trend is occurring because so many marketers are getting into testing these days. It’s a flood of newbies who don’t yet have the experience to use best practices. Our goal is to increase education about this, so that in the future nobody makes this mistake.
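To make the reversal described above concrete, here is a minimal sketch. The visitor, form-fill, and sale counts are invented purely for illustration (they are not results from any WhichTestWon entry), and the names `VariationResult` and `winner_by` are hypothetical helpers. It shows how a version can win on form fills yet lose once leads are tracked through to closed sales.

```python
from dataclasses import dataclass


@dataclass
class VariationResult:
    """Counts for one test variation (all numbers below are made up)."""
    name: str
    visitors: int
    form_fills: int
    closed_sales: int

    @property
    def form_fill_rate(self) -> float:
        # Top-of-funnel metric: form fills per visitor.
        return self.form_fills / self.visitors

    @property
    def sale_rate(self) -> float:
        # Down-funnel metric: closed sales per visitor.
        return self.closed_sales / self.visitors


def winner_by(results, metric: str) -> str:
    """Return the name of the variation with the highest value of `metric`."""
    return max(results, key=lambda r: getattr(r, metric)).name


# Hypothetical example data: A collects more leads, B closes more sales.
results = [
    VariationResult("A", visitors=10_000, form_fills=500, closed_sales=25),
    VariationResult("B", visitors=10_000, form_fills=400, closed_sales=40),
]

print(winner_by(results, "form_fill_rate"))  # A
print(winner_by(results, "sale_rate"))       # B
```

In this made-up case, stopping the measurement at the form fill would crown A, while the sales data favors B. A real analysis would also check that each difference is statistically significant before declaring a winner.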

2. What is the most common objection you hear to starting an A/B testing program?

Many marketers have told us their biggest difficulty is putting together a proof of concept for the HIPPOs (the “Highest Paid Person’s Opinion”, i.e. the senior people whose say-so decides things) to sign off on. We’ve posted a library of 300+ Case Studies on our site specifically to help marketers with that, because they can use test examples from their competitors to impress the HIPPO.

Another issue we have seen time and time again is building an experimentation culture. Conversion rate optimization via testing makes marketers (in some eyes) more accountable for their decisions, mainly because they are creating a test that will either be a success or a failure. If you have not built a testing culture and redefined the notion of ‘failure’, marketers won’t be enticed to think outside of the box.

3. Any plans for including multivariate tests? What are your thoughts on MVT?

We do include them – many of our winners were multivariate tests, as are many of the tests in our Case Study library. MVT is a great way to refine a page with tons of elements when you don’t want to wait for months of A/B tests. We prefer A/B tests for very simple tests and radical redesigns… and you might then run MVT on the winner later to tweak it.

4. As a marketer yourself, what is your favorite element to test?

Overlays. These are those ‘pop-ups’ that take over a page you are looking at but don’t completely obscure it. (Unlike classic pop-ups, they are not commonly blocked or banned by Google.) We got a lot of awards submissions for overlays, and we just released a Case Study about our own site’s email opt-in offer overlay test. We tested the timing of the overlay: how soon should an overlay appear after a visitor enters your site, at 15 seconds, 30 seconds, or 45 seconds? We were surprised to see that on our site the 15-second timing worked the best! You can see the overlay for yourself at http://whichtestwon.com... Just go there and wait for 15 seconds.

5. Design or copy: what is more important when it comes to landing pages?

Copy tests are VERY powerful and often the easiest to run. If I had to pick between the two, I’d run a copy test first. But your copy can’t work if your design is too cluttered or distracting, or your images aren’t supporting it. These things go hand in hand.

6. When starting a testing program, where should marketers begin?

Look at the data to figure out what’s a problem on your site. Generally we advise that you start with a page that is working fairly well, because if you can improve results even a little, that’s a big lift to your bottom line. Then, on that page, use every bit of data you can get your hands on to make a hypothesis about what could be changed to help conversions. Data might be web analytics, visitor surveys, customer interviews, click data, eyetracking heatmaps… there are all sorts of ways to get information about your page. Then consider your internal resources: can you do a copy test? Can you do a design or image test? And what sort of testing tech will your IT team feel good about using? There are lots of (very) inexpensive options for testing tech out there. We list them in a free vendor guide on our site. Lastly, before you get started, consider how you’re going to use the results to promote the idea of testing within your own organization, either to win your boss over to the idea of more tests or to the idea of investing in an agency to help you do really great tests. Office politics play a big role in this.

7. What test has surprised you the most? Why?

You’ll find out at the Awards 😉

8. Should all marketers test? Why or why not?

As long as you have a boss who will support testing – don’t do a ‘black ops’ test and risk your job, always get management buy-in – and the page you intend to test gets enough conversions per month to support a test, you should be testing. Conversions could be sales, opt-ins, form-fills, clicks, etc. You can use a testing calculator (there are several free ones) to see how many conversions you need to wind up with a statistically conclusive test.
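As a rough illustration of what such a testing calculator does, here is a minimal sketch. It assumes a standard two-sided, two-proportion z-test with a 5% significance level and 80% power. These defaults, the function name `visitors_per_variation`, and the example rates are assumptions for illustration, not the method of any particular free calculator.

```python
import math
from statistics import NormalDist


def visitors_per_variation(baseline_rate: float,
                           relative_lift: float,
                           alpha: float = 0.05,
                           power: float = 0.80) -> int:
    """Approximate visitors needed in EACH variation of an A/B test.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%
    relative_lift: smallest lift worth detecting, e.g. 0.20 for +20%
    alpha, power:  assumed significance level and statistical power
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical page: 5% baseline conversion, hoping to detect a 20% relative lift.
n = visitors_per_variation(0.05, 0.20)
print(f"Visitors needed per variation: {n}")
print(f"Conversions expected in the control: {round(n * 0.05)}")
```

The smaller the lift you want to detect, the more visitors (and conversions) each variation needs, which is why low-traffic pages can take a long time to produce a conclusive test.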

9. What is the most important piece of advice you can give to marketers that need to improve their conversion rate?

Sorry – I’ll be boring and say “test smart” – use the resources mentioned above to construct a solid hypothesis. If your hypothesis is strong, then it doesn’t matter if your test wins or loses…you will gain insights for future iterations.

10. How many elements should you test at once? Why?

For an A/B test you can test one element per variation. (So you could run A/B/C/D, etc. variations all at the same time if your traffic supports it.) For a multivariate test you can test tons of different elements at the same time, but again, your traffic must support it.
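To see why traffic limits how many elements a multivariate test can handle, here is a minimal sketch. It assumes a full-factorial design in which every combination of options is shown and traffic is split evenly; some testing tools use other designs. The function names and the example numbers are hypothetical.

```python
import math


def mvt_combinations(options_per_element):
    """Number of page versions in a full-factorial multivariate test.

    options_per_element: how many options each tested element has,
    e.g. [3, 2, 2] for 3 headlines, 2 images, and 2 button colors.
    """
    return math.prod(options_per_element)


def visitors_per_combination(monthly_visitors, options_per_element):
    """Visitors each combination receives if traffic is split evenly."""
    return monthly_visitors // mvt_combinations(options_per_element)


# Hypothetical test: 3 headlines x 2 images x 2 button colors.
elements = [3, 2, 2]
print(mvt_combinations(elements))                  # 12
print(visitors_per_combination(24_000, elements))  # 2000
```

Each extra element multiplies the number of combinations, so the traffic available to each one shrinks quickly.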
